Personalized Audio in 2026: The Intelligent Shift Beyond Static Presets

For years, audio customization meant choosing a label.

“Bass Boost.”

“Vocal.”

“Rock.”

“Podcast.”

These presets were static assumptions about taste. They grouped millions of listeners into a handful of equalizer curves and called it personalization.

That era is fading.

Personalized audio is replacing presets — not by adding more options, but by removing the need for manual selection altogether. Instead of asking what you prefer, modern systems are learning how you hear.

And that distinction changes everything.

Why Presets Were Always a Compromise

Presets were built on genre stereotypes, not individual perception.

A “Bass Boost” curve assumes everyone wants deeper sub-bass and slightly recessed mids. A “Vocal” preset assumes speech clarity requires identical frequency shaping for all listeners. But what each listener actually perceives, and prefers, varies considerably with:

- Age-related frequency sensitivity

- Ear canal shape

- Listening volume habits

- Music preference patterns

- Environmental context

Two people listening to the same track through identical headphones do not hear the same sound. Presets never accounted for that biological variance.

They simplified personalization into categories.

Modern personalized audio systems treat listening as biometric data instead.

How Personalized Audio Actually Works

At a technical level, personalization systems combine three layers:

- Hearing Profile Calibration

Users complete tone-detection or response-based tests. The system maps sensitivity across frequencies, identifying dips or peaks in perception.

- Behavioral Learning

Algorithms observe listening habits (volume adjustments, skipped tracks, replayed sections) to refine tuning preferences.

- Real-Time Environmental Adaptation

Ambient noise and context influence frequency shaping dynamically.
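As a rough illustration of the calibration layer, the sketch below turns per-band detection thresholds into compensation gains. The band values, the "half-gain" mapping (a simple heuristic borrowed from hearing-aid fitting), the 12 dB cap, and the function name are all assumptions chosen for this example, not a description of how any particular product works.

```python
def compensation_gains(thresholds_db, reference_db=0.0, fraction=0.5, max_gain_db=12.0):
    """Map per-band detection thresholds (dB) to per-band gains (dB).

    Assumption: a band whose threshold sits above `reference_db` gets a boost
    equal to `fraction` of that elevation, capped at `max_gain_db`.
    Bands at or below the reference get no boost.
    """
    gains = {}
    for freq_hz, threshold in thresholds_db.items():
        elevation = max(0.0, threshold - reference_db)
        gains[freq_hz] = min(max_gain_db, fraction * elevation)
    return gains


# Hypothetical test result: thresholds rise toward the high frequencies.
measured = {250: 5.0, 500: 5.0, 1000: 10.0, 2000: 15.0, 4000: 30.0, 8000: 40.0}
gains = compensation_gains(measured)
for freq_hz, gain in gains.items():
    print(f"{freq_hz:>5} Hz: +{gain:.1f} dB")
```

The cap matters in practice: an uncapped mapping could push large boosts into exactly the bands where a listener is least sensitive, which runs against the hearing-conscious tuning the article describes.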

The result is not a fixed EQ curve. It is a continuously updated listening profile.
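One simple way to picture a continuously updated profile is an exponential moving average: each observed adjustment nudges the stored gains slightly instead of overwriting them. The function name, the dict-of-bands representation, and the 0.1 learning rate below are hypothetical, and real systems may use quite different models.

```python
def update_profile(stored, observed, rate=0.1):
    """Blend newly observed per-band gains (dB) into the stored profile.

    Bands that do not appear in `observed` are carried over unchanged.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    updated = {}
    for band, gain in stored.items():
        if band in observed:
            updated[band] = (1.0 - rate) * gain + rate * observed[band]
        else:
            updated[band] = gain
    return updated


profile = {250: 2.0, 1000: 0.0, 4000: 6.0}
# Hypothetical behavior signal: the listener repeatedly prefers about +3 dB at 1 kHz.
for _ in range(20):
    profile = update_profile(profile, {1000: 3.0})
print(profile)
```

A small rate keeps the profile stable against one-off adjustments while still letting a consistent habit pull the tuning toward it over time.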

That’s the defining shift: personalized audio is dynamic, not static.

Preset vs Personalized: A Structural Comparison

| Approach | Preset-Based Audio | Personalized Audio |
|---|---|---|
| Basis | Genre assumption | Individual hearing profile |
| Adjustment | Manual selection | Automated calibration |
| Frequency Tuning | Fixed EQ curve | Dynamic, adaptive shaping |
| Context Awareness | None | Environmental integration |
| Longevity | Static over time | Evolves with usage |
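The structural difference in the table can be sketched in a few lines: a preset is a lookup that always returns the same curve, while an adaptive system computes gains from the listener's profile and the current surroundings. The preset values, the 250 Hz cutoff, and the noise-to-boost mapping below are invented for illustration only.

```python
# A preset is a fixed table: same curve for every listener and every setting.
PRESETS = {"Bass Boost": {60: 6.0, 1000: -1.0, 8000: 0.0}}


def preset_gains(name):
    """Return the fixed per-band gains (dB) for a named preset."""
    return dict(PRESETS[name])


def adaptive_gains(profile_gains, ambient_db, quiet_db=50.0, slope=0.2, max_boost_db=6.0):
    """Start from a personal profile and add a low-band boost as ambient noise rises.

    Assumption: noise above `quiet_db` mostly masks low frequencies, so only
    bands at or below 250 Hz receive the extra boost, capped at `max_boost_db`.
    """
    boost = min(max_boost_db, max(0.0, (ambient_db - quiet_db) * slope))
    return {
        band: gain + (boost if band <= 250 else 0.0)
        for band, gain in profile_gains.items()
    }


personal = {125: 1.0, 4000: 3.0}
quiet_room = adaptive_gains(personal, ambient_db=40.0)
subway = adaptive_gains(personal, ambient_db=80.0)
print(preset_gains("Bass Boost"), quiet_room, subway)
```

The preset function has no inputs beyond a label, which is the whole limitation; the adaptive function takes both a listener and a context.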

Presets assume taste is universal.

Personalized audio assumes perception is individual.

That philosophical difference drives the engineering direction of modern audio platforms.

The Psychological Impact of Personal Tuning

Listeners often describe personalized systems as “clearer” or “more natural.” Interestingly, the change is rarely dramatic in isolation. Instead, it removes subtle friction.

Vocals sit forward without harshness.

Bass feels present without overwhelming midrange detail.

High frequencies shimmer without fatigue.

Because the tuning reflects personal hearing thresholds, the brain expends less effort compensating for frequency imbalances. Listening becomes cognitively smoother.

This is why personalized audio often feels less processed than aggressive preset EQ — even though it relies on more computational intelligence.

The goal is invisibility, not coloration.

Why This Shift Is Happening Now

Several forces accelerated the move away from presets:

- On-device processing power now supports continuous DSP refinement.

- Machine learning models can run locally, avoiding the latency of a network round trip.

- Consumer expectation has shifted toward adaptive technology in every category.

- Health awareness has increased demand for safe, hearing-conscious tuning.

When devices already adjust brightness, refresh rate, and power management automatically, static audio profiles feel outdated.

Sound is becoming part of the adaptive system stack.

The Industry Implication

Audio brands are gradually repositioning themselves around intelligence rather than pure acoustic hardware.

Driver size, material quality, and enclosure tuning still matter deeply. But the competitive edge increasingly lies in:

- Algorithm sophistication

- Calibration accuracy

- Cross-device profile syncing

- Context-aware DSP tuning

Hardware defines potential.

Software unlocks personalization.

As personalized audio matures, differentiation will hinge less on how powerful a driver is and more on how precisely it is tuned to the individual wearing it.

A Quiet Challenge: Identity vs Accuracy

There is an interesting tension emerging.

Traditional audio brands built identity around signature sound — warm, neutral, analytical, V-shaped. Personalized systems flatten those identities in favor of listener optimization.

If every product adapts uniquely, brand sound signatures may become less distinct.

The trade-off becomes clear:

Do you preserve a house sound, or do you prioritize listener-specific accuracy?

Personalized audio leans toward the latter.

Listening, Recalibrated

The movement away from presets reflects a broader transformation in consumer technology. Systems no longer ask users to adapt to them. They adapt to users.

Personalized audio is not about louder bass or sharper treble. It is about aligning digital reproduction with biological hearing. That alignment reduces fatigue, improves clarity, and subtly enhances immersion.

Presets categorized listeners.

Personalization understands them.

As computational power continues to merge with acoustic engineering, static EQ labels will feel increasingly archaic.

The future of sound isn’t a menu.

It’s a profile.

And that profile evolves with you.


What’s your take on this?

At Vibetric, the comments go way beyond quick reactions — they’re where creators, innovators, and curious minds spark conversations that push tech’s future forward.

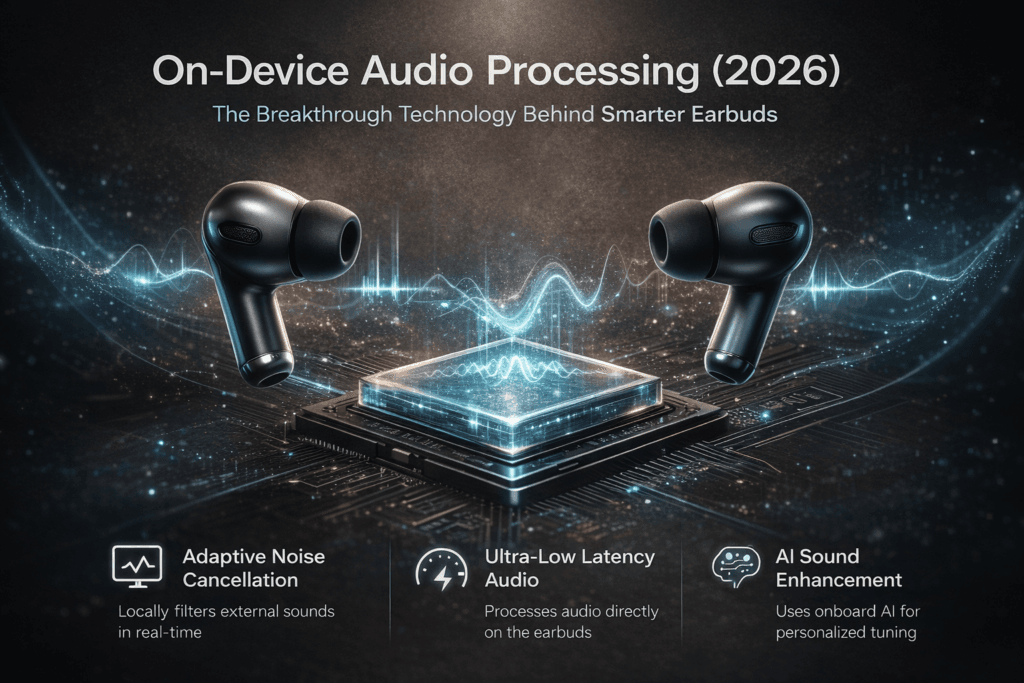

On-Device Audio Processing (2026): The Breakthrough Technology Behind Smarter Earbuds

On-Device Audio Processing (2026): The Breakthrough Technology Behind Smarter Earbuds A commuter taps play on the subway. The train roars into the

AI Battery Optimization Is Finally Making Earbud Battery Life Reliable in 2026

AI Battery Optimization Is Revolutionizing Earbud Battery Life in 2026 The spec sheet still says ‘30 hours.’ What it doesn’t say is