AI Gaming Hardware in 2026: The Intelligent Shift Redefining Performance

For decades, gaming hardware evolved in predictable increments: more GPU cores, higher clock speeds, faster memory bandwidth. Each generation promised sharper textures and higher frame rates.

That trajectory still exists — but it’s no longer the defining story.

The rise of AI gaming hardware signals a structural pivot. Graphics systems are transforming from brute-force rendering engines into hybrid compute platforms that combine rasterization with machine learning. The result isn’t just more frames. It’s smarter frames.

And that changes how performance is measured.

Latency and Stability Are the New Battleground

While visual fidelity grabs attention, the deeper transformation is happening beneath the surface.

AI models are increasingly applied to frame pacing, resource allocation, and input prediction. Instead of reacting to workload spikes, systems can anticipate them. That predictive layer reduces stutter and stabilizes performance during complex scenes.
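To make "anticipate rather than react" concrete, here is a minimal sketch of a predictive pacer. It is an illustration only: the `PredictivePacer` class, its thresholds, and the simple linear extrapolation standing in for a trained model are all assumptions, not any vendor's actual scheduler.

```python
class PredictivePacer:
    """Hypothetical pacer: extrapolates the next frame time and adjusts render scale."""

    def __init__(self, budget_ms=16.7, min_scale=0.5, step=0.05):
        self.budget_ms = budget_ms    # target frame time (about 60 fps)
        self.min_scale = min_scale    # lowest allowed render-resolution scale
        self.step = step              # how far the scale moves per frame
        self.scale = 1.0              # current render-resolution scale
        self.last_ms = None           # previous frame time, if any

    def predict_next(self, frame_ms):
        # Stand-in for a learned model: extend the trend of the last two frames.
        if self.last_ms is None:
            prediction = frame_ms
        else:
            prediction = frame_ms + (frame_ms - self.last_ms)
        self.last_ms = frame_ms
        return prediction

    def update(self, frame_ms):
        predicted = self.predict_next(frame_ms)
        if predicted > self.budget_ms:
            # A spike is expected: lower the scale before the budget is missed.
            self.scale = max(self.min_scale, self.scale - self.step)
        elif predicted < 0.8 * self.budget_ms:
            # Comfortable headroom: restore quality gradually.
            self.scale = min(1.0, self.scale + self.step)
        return self.scale


pacer = PredictivePacer()
for frame_ms in [12.0, 12.5, 15.0, 16.0, 13.0, 11.0]:
    scale = pacer.update(frame_ms)
    print(f"frame {frame_ms:5.1f} ms -> render scale {scale:.2f}")
```

Note that the 15.0 ms frame is still within budget, yet the scale drops because the trend points past 16.7 ms. That is the difference between predicting a spike and reacting to one.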

Console ecosystems developed by companies like Sony and Microsoft now incorporate machine-learning-assisted scheduling to streamline asset streaming and scene transitions.

Immersion isn’t just about how a game looks.

It’s about how consistently it responds.

From Pixel Pushing to Intelligent Reconstruction

Traditional rendering demanded that every pixel be calculated natively. Higher resolutions required proportionally more power: 4K has four times the pixels of 1080p, and shading cost climbs roughly in step with pixel count.

AI gaming hardware reframes that equation.

Neural networks can upscale lower-resolution frames into higher-resolution outputs with impressive clarity. Instead of brute-force rendering, systems reconstruct detail using trained models. Companies such as NVIDIA and AMD have embedded AI-driven upscaling and frame generation into their architectures, shifting emphasis from raw throughput to perceptual optimization.

This approach reduces strain on hardware while maintaining — and sometimes enhancing — visual fidelity.

The goal isn’t rendering everything.

It’s rendering intelligently.
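The savings from reconstruction are easy to estimate with simple pixel arithmetic. The sketch below compares how many pixels are natively shaded at common render resolutions against a 4K output. The helper names are illustrative, and the calculation ignores the cost of the upscaling pass itself.

```python
def pixel_count(width, height):
    """Total pixels in one frame at the given resolution."""
    return width * height


def shaded_fraction(render_res, output_res):
    """Share of output pixels that are actually rendered natively."""
    return pixel_count(*render_res) / pixel_count(*output_res)


RES_1080P = (1920, 1080)
RES_1440P = (2560, 1440)
RES_4K = (3840, 2160)

for name, res in [("1080p", RES_1080P), ("1440p", RES_1440P)]:
    fraction = shaded_fraction(res, RES_4K)
    print(f"{name} -> 4K: {fraction:.1%} of output pixels rendered natively")
```

Rendering at 1080p and reconstructing to 4K means shading a quarter of the output pixels; at 1440p it is a little under half.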

How the Performance Model Is Quietly Changing

The philosophical shift becomes clearer when comparing design priorities:

| Legacy Graphics Focus | AI Gaming Hardware Focus |
|---|---|
| Native high-resolution rendering | AI-assisted upscaling |
| Fixed frame output | Frame generation & interpolation |
| Manual graphics presets | Dynamic AI workload balancing |
| Pure raster pipelines | Hybrid raster + neural pipelines |

Performance is increasingly defined by how convincingly detail is reconstructed rather than how expensively it is computed.

Perception becomes the optimization target.

Developers Gain — and Adapt

For studios, AI gaming hardware offers both flexibility and responsibility.

By targeting lower base resolutions, developers can redirect compute resources toward physics simulations, advanced lighting systems, or larger world maps. AI-assisted animation blending and procedural systems reduce repetitive workload during development.

However, hardware-specific AI features require careful cross-platform optimization. A neural upscaling method tuned for one architecture may behave differently on another. Balancing artistic vision with algorithmic reconstruction demands technical precision.

AI expands creative possibility.

It also complicates the pipeline.

The Limits of Automation

It’s important to temper enthusiasm.

AI upscaling and frame generation rely on probabilistic models. Under certain motion conditions, artifacts can emerge. Frame interpolation can improve perceived smoothness, but it carries a latency cost: an in-between frame cannot be shown until the next rendered frame exists, so the newest real frame reaches the screen later than it otherwise would. Competitive gamers, in particular, remain sensitive to responsiveness.
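A rough way to reason about the interpolation trade-off is to model it. The sketch below assumes an idealized pipeline with evenly paced output and zero cost for generating frames; both are simplifications, and the function names are hypothetical. Real implementations add generation overhead and may apply latency-reduction techniques that this model ignores.

```python
def displayed_fps(base_fps, generated_per_rendered=1):
    """Output frame rate when each rendered frame is followed by generated ones."""
    return base_fps * (1 + generated_per_rendered)


def added_latency_ms(base_fps, generated_per_rendered=1):
    """Extra delay before a rendered frame is shown, under the idealized model.

    The rendered frame must wait while the generated frames that precede it
    are displayed, each occupying one output interval.
    """
    base_interval_ms = 1000.0 / base_fps
    k = generated_per_rendered
    return base_interval_ms * k / (k + 1)


for base in (30, 60):
    print(
        f"base {base} fps -> displayed {displayed_fps(base)} fps, "
        f"added delay about {added_latency_ms(base):.1f} ms"
    )
```

Under these assumptions, a 30 fps base interpolated to 60 fps looks as smooth as native 60 fps but responds later, which is why a strong base frame rate still matters.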

AI gaming hardware enhances capability, but it does not eliminate the need for strong baseline silicon.

Intelligence supports performance.

It doesn’t replace it.

Beyond Visuals: Toward Adaptive Gameplay

The most transformative potential of AI gaming hardware may extend beyond graphics.

Machine learning models are increasingly influencing non-player character behavior, procedural world generation, and adaptive difficulty balancing. Systems can analyze player behavior patterns and subtly adjust pacing or challenge curves.
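As an illustration of adaptive difficulty balancing, here is a minimal rule-based sketch. A shipping system might use a trained model instead; the `DifficultyTuner` class, its target win rate, and its step sizes are assumptions chosen for readability, not a description of any specific game.

```python
from collections import deque


class DifficultyTuner:
    """Hypothetical tuner: nudges difficulty toward a target player win rate."""

    def __init__(self, target_win_rate=0.6, tolerance=0.1, window=10, step=0.05):
        self.target = target_win_rate
        self.tolerance = tolerance            # dead zone around the target
        self.step = step                      # adjustment per recorded encounter
        self.results = deque(maxlen=window)   # recent outcomes (1.0 = win)
        self.difficulty = 0.5                 # normalized, 0.0 easy to 1.0 hard

    def record(self, player_won):
        self.results.append(1.0 if player_won else 0.0)
        win_rate = sum(self.results) / len(self.results)
        if win_rate > self.target + self.tolerance:
            # Player is winning too easily: raise the challenge slightly.
            self.difficulty = min(1.0, self.difficulty + self.step)
        elif win_rate < self.target - self.tolerance:
            # Player is struggling: ease off.
            self.difficulty = max(0.0, self.difficulty - self.step)
        return self.difficulty


tuner = DifficultyTuner()
for outcome in [True, True, True, False, True]:
    level = tuner.record(outcome)
    print(f"won={outcome!s:5} -> difficulty {level:.2f}")
```

Small steps and a tolerance band keep the adjustments subtle, so the player experiences a smoother challenge curve rather than visible swings.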

In this context, hardware becomes more than a rendering device. It becomes a platform for dynamic world simulation.

As neural accelerators become standard, the boundary between game design and silicon architecture blurs.

The Efficiency Imperative

There is also an economic dimension.

As graphical ambition grows, so do power consumption and cooling requirements. AI-assisted reconstruction can improve performance per watt because fewer pixels are shaded natively, which eases the pressure to keep scaling up raw hardware.

Efficiency is becoming strategically important. Intelligent reconstruction allows manufacturers to push visual fidelity without equally aggressive increases in thermal output.

That balance keeps systems sustainable — physically and economically.

Where This Evolution Leads

AI gaming hardware is redefining what “next generation” actually means. It’s no longer purely about teraflops or shader counts. It’s about how effectively machine learning enhances perception, smoothness, and responsiveness.

The future of gaming performance lies in balance — raw compute paired with adaptive intelligence.

The performance race was once about rendering more.

The next race is about rendering smarter.

And that shift is already underway.

Stay Synced with Vibetric

- Follow our Instagram @vibetric_official for perspective-driven analysis on AI gaming hardware trends.

- Bookmark vibetric.com to track how intelligent rendering is reshaping play.

- Check back for structured insight into the evolving architecture of immersive systems.

What’s your take on this?

At Vibetric, the comments go way beyond quick reactions — they’re where creators, innovators, and curious minds spark conversations that push tech’s future forward.

Frame Stability in 2026: The Hidden Metric Redefining Gaming Performance

Frame Stability in 2026: The Hidden Metric Redefining Gaming Performance For years, gaming conversations revolved around one number: frames per second. Higher

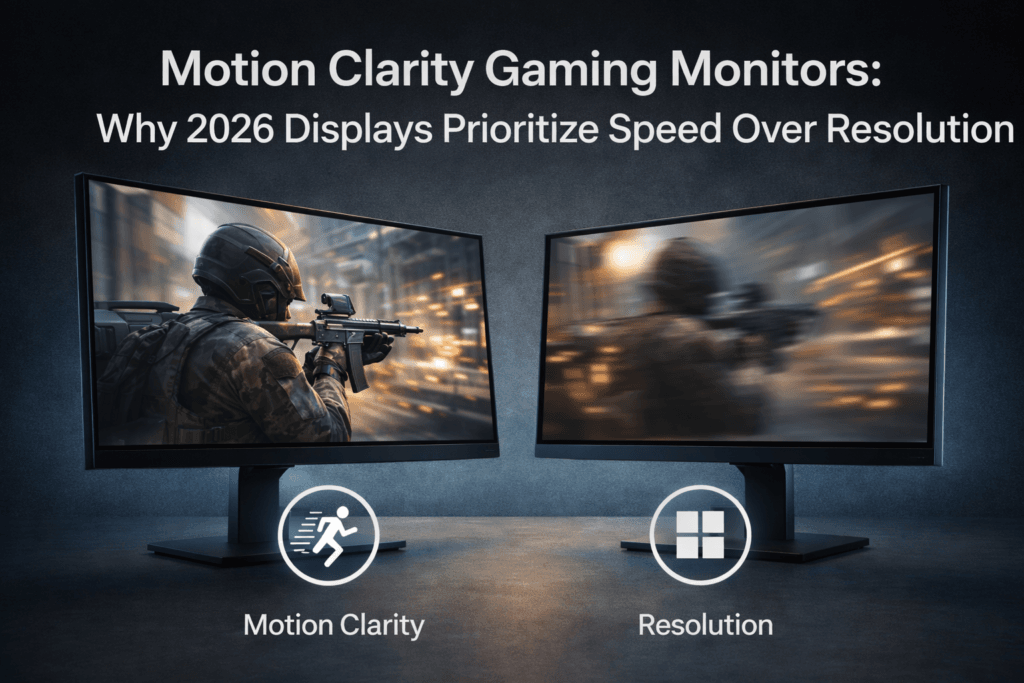

Motion Clarity Gaming Monitors: Why 2026 Displays Prioritize Speed Over Resolution

Motion Clarity Gaming Monitors: Why 2026 Displays Prioritize Speed Over Resolution For years, gaming monitor marketing revolved around one number: resolution. Full