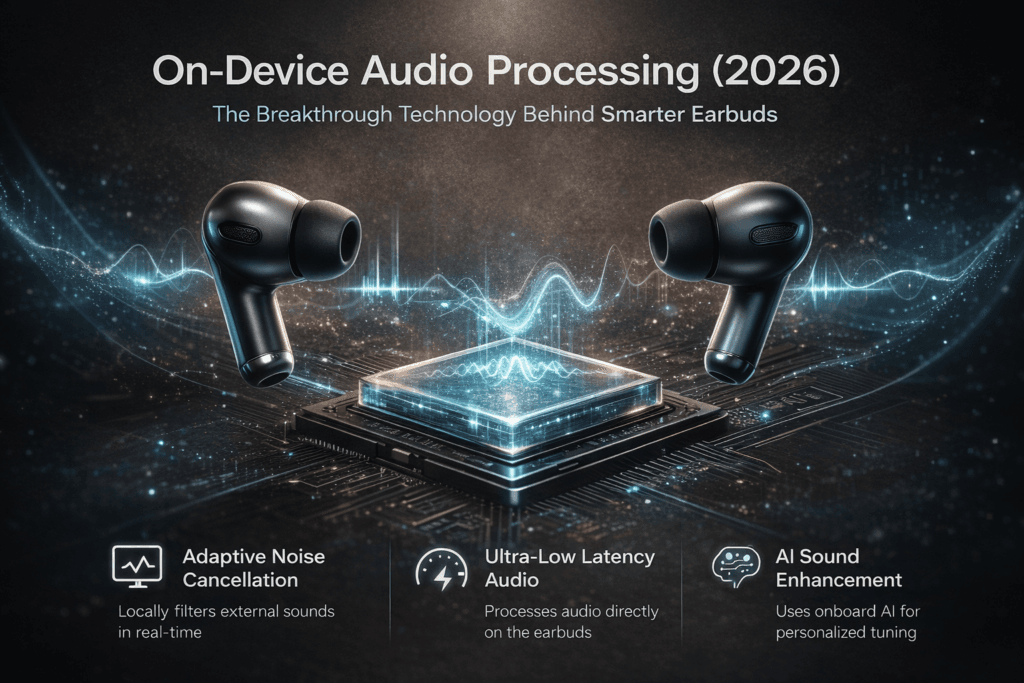

On-Device Audio Processing (2026): The Breakthrough Technology Behind Smarter Earbuds

A commuter taps play on the subway. The train roars into the station, conversations swell around them, and the platform fills with echoes. Yet somehow the music remains crisp, vocals centered, and background chaos fades into silence. Nothing changed on the phone. No settings were adjusted.

What actually changed lives inside the earbuds themselves.

The newest generation of flagship earbuds is quietly shifting from passive audio accessories into miniature computing systems. The real revolution isn’t louder drivers or stronger noise cancellation—it’s on-device audio processing, a technological shift that lets earbuds analyze sound locally in real time. And once you understand how it works, you start noticing just how dramatically it’s changing the listening experience.

When Earbuds Stopped Being Speakers and Became Computers

For years, wireless earbuds mostly acted as small playback devices. The phone or streaming service handled the heavy work—compression, spatial rendering, and voice processing. Earbuds simply reproduced the signal they received.

But as wireless audio matured, manufacturers faced a bottleneck. Bluetooth bandwidth, latency, and cloud processing limits were preventing the next leap in sound quality and responsiveness.

The solution: move the intelligence closer to your ears.

Modern flagship earbuds now integrate specialized chips capable of running on-device audio processing algorithms directly within the earbuds themselves. Instead of waiting for the phone or cloud to analyze audio signals, the earbuds process environmental noise, spatial cues, and voice signals locally.

This shift cuts latency and enables features that previously demanded more computing power than an earbud could supply.

In practical terms, earbuds have evolved from accessories into dedicated audio processors.

The Technology Quietly Running Inside Your Earbuds

To understand why on-device audio processing matters, you have to look at what happens between the microphone and the speaker driver.

Inside a modern earbud, multiple sensors and microphones constantly capture acoustic information. Dedicated digital signal processors (DSPs) then interpret this data in real time.

Instead of applying static audio filters, these processors dynamically adapt to what they detect.

A simplified breakdown looks like this:

| Component | Role in On-Device Processing |
|---|---|
| Multiple microphones | Capture external noise and voice signals |
| DSP audio chip | Runs adaptive filtering algorithms |
| Speech-detection models | Distinguish speech from background noise |
| Spatial audio engine | Reconstructs directional sound |
| Low-latency memory | Maintains real-time processing loops |

All of these steps occur within milliseconds.

The result: the earbuds adjust the sound before it even reaches your ears.
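To put "within milliseconds" in perspective, here is a rough sketch of the arithmetic. A processor that works on small frames of audio has to wait at least as long as it takes each frame to fill. The 48 kHz sample rate and the frame sizes below are illustrative assumptions, not figures from any specific earbud.

```python
def frame_latency_ms(frame_samples: int, sample_rate_hz: int) -> float:
    """Time needed to fill one processing frame, in milliseconds."""
    return 1000.0 * frame_samples / sample_rate_hz


SAMPLE_RATE_HZ = 48_000  # assumed sample rate, for illustration only

for frame_samples in (32, 64, 128, 256):
    latency = frame_latency_ms(frame_samples, SAMPLE_RATE_HZ)
    print(f"{frame_samples:>4} samples -> {latency:.2f} ms")
```

Smaller frames mean less waiting but more frequent processing, which is one reason the memory and DSP design in the table above matter.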

The Misconception That Audio Quality Comes From Drivers Alone

Most marketing around earbuds still focuses on driver size and codec support. While both matter, they only tell part of the story.

The truth is that on-device audio processing increasingly determines how audio actually sounds.

A well-tuned algorithm can dramatically improve clarity, separation, and perceived detail—even with modest hardware. Conversely, poorly implemented processing can flatten dynamics or introduce artifacts.

Three key technologies illustrate this shift:

- Adaptive Noise Intelligence

Traditional noise cancellation relies on predictable patterns—engine hum, airplane noise, HVAC systems. But urban environments are chaotic.

Modern earbuds analyze noise patterns locally and update filtering models continuously. The earbuds learn what to cancel and what to preserve.

This is only possible because on-device audio processing lets the earbuds evaluate tens of thousands of audio samples per second from each microphone.
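One classic way to update a filtering model continuously is a normalized least-mean-squares (NLMS) adaptive filter. The sketch below is a textbook noise canceller, not the algorithm any particular earbud uses. The signal names, the 8-tap filter length, the step size, and the synthetic test signals are all assumptions chosen for illustration.

```python
import math
import random


def nlms_cancel(primary, reference, taps=8, mu=0.2, eps=1e-6):
    """Remove the part of `primary` that can be predicted from `reference`."""
    weights = [0.0] * taps
    history = [0.0] * taps  # recent reference samples, newest first
    output = []
    for desired, ref_sample in zip(primary, reference):
        history = [ref_sample] + history[:-1]
        estimate = sum(w * h for w, h in zip(weights, history))
        error = desired - estimate  # what is left after cancellation
        norm = eps + sum(h * h for h in history)
        # Nudge every weight a little on each sample: the "continuous update".
        weights = [w + mu * error * h / norm for w, h in zip(weights, history)]
        output.append(error)
    return output


def power(samples):
    return sum(s * s for s in samples) / len(samples)


random.seed(0)
n = 4000
noise = [random.gauss(0.0, 1.0) for _ in range(n)]  # hypothetical outer-mic signal
leak = [0.6 * noise[i] + 0.3 * (noise[i - 1] if i else 0.0) for i in range(n)]
music = [0.1 * math.sin(2 * math.pi * 440 * i / 16000) for i in range(n)]
primary = [m + l for m, l in zip(music, leak)]  # what reaches the ear

cleaned = nlms_cancel(primary, noise)
tail = n // 2  # measure only after the filter has had time to adapt
before = power(leak[tail:])
after = power([c - m for c, m in zip(cleaned[tail:], music[tail:])])
print(f"noise power before: {before:.4f}, after: {after:.4f}")
```

The filter never sees the music on its own. It only learns how the outside noise leaks in, so the music is what remains once that leak is subtracted.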

- Personalized Sound Profiles

Some flagship earbuds now scan the listener’s ear canal acoustics and build a customized EQ curve. Since ear shapes vary widely, personalized tuning can significantly improve perceived clarity.

Running these models locally ensures instant adjustment without sending biometric audio data to external servers.
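As a minimal sketch of the idea, a personalized EQ curve can be thought of as the per-band difference between a target response and what was measured in the listener's ear, limited to a safe range. The band frequencies, the measured values, and the 6 dB limit below are hypothetical, and real products likely use far more detailed measurements.

```python
def eq_correction_db(measured_db, target_db, max_gain_db=6.0):
    """Per-band gain in dB that moves the measured response toward the target."""
    correction = {}
    for band_hz, target in target_db.items():
        delta = target - measured_db[band_hz]
        # Clamp so a deep dip is not answered with an extreme boost.
        correction[band_hz] = max(-max_gain_db, min(max_gain_db, delta))
    return correction


# Hypothetical in-ear measurement, in dB relative to the 1 kHz band.
measured = {250: 1.5, 1000: 0.0, 4000: -4.0, 8000: -9.0}
target = {250: 0.0, 1000: 0.0, 4000: 0.0, 8000: 0.0}

print(eq_correction_db(measured, target))
```

The clamp is a design choice in this sketch: it trades a perfect match to the target for protection against distortion from large boosts.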

- Ultra-Low Latency Spatial Audio

Spatial audio used to rely heavily on phone processors. But newer systems move much of the rendering pipeline into the earbuds.

That means head tracking and directional audio remain synchronized even when devices switch between apps or operating systems.
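To illustrate why head tracking needs a fast local loop, the sketch below recomputes a sound's direction relative to the head each time the head turns, then estimates the arrival-time difference between the ears with the Woodworth spherical-head approximation. The head radius is a common textbook value and the function names are hypothetical. A real renderer would use full head-related transfer functions rather than this single cue.

```python
import math

HEAD_RADIUS_M = 0.0875       # assumed average head radius
SPEED_OF_SOUND_M_S = 343.0   # in air at about 20 degrees Celsius


def relative_azimuth_deg(source_deg, head_yaw_deg):
    """Direction of the source relative to the nose, in the range (-180, 180]."""
    rel = (source_deg - head_yaw_deg) % 360.0
    return rel - 360.0 if rel > 180.0 else rel


def itd_seconds(azimuth_deg):
    """Woodworth estimate of interaural time difference; positive means right ear first."""
    # Mirror sources behind the head onto the front hemisphere.
    if azimuth_deg > 90.0:
        azimuth_deg = 180.0 - azimuth_deg
    elif azimuth_deg < -90.0:
        azimuth_deg = -180.0 - azimuth_deg
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS_M / SPEED_OF_SOUND_M_S) * (theta + math.sin(theta))


source_deg = 30.0  # a virtual speaker fixed 30 degrees to the right in the room
for head_yaw_deg in (0.0, 15.0, 30.0):
    rel = relative_azimuth_deg(source_deg, head_yaw_deg)
    print(f"yaw {head_yaw_deg:>4.1f} -> relative {rel:>5.1f} deg, "
          f"ITD {itd_seconds(rel) * 1e6:.0f} microseconds")
```

Because the time difference shrinks to zero as the listener turns to face the source, any lag between head motion and this recalculation makes the virtual speaker appear to drift.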

Why Cloud Audio Processing Was Never the Ideal Solution

At first glance, cloud-based audio enhancement sounds appealing. Offload processing to powerful servers and stream back improved sound.

But audio doesn’t behave well with long delays.

Even small latency increases can disrupt synchronization between video and sound. Voice calls become awkward. Interactive audio experiences feel disconnected.

On-device audio processing solves this by removing the network round trip from the processing path entirely.

A simplified comparison illustrates the difference:

| Processing Method | Latency | Privacy | Reliability |
|---|---|---|---|
| Cloud audio processing | Higher | Lower | Dependent on connection |
| Phone-based processing | Moderate | Medium | Device-dependent |
| On-device audio processing | Ultra-low | High | Fully local |

For real-time audio applications—gaming, calls, immersive media—local processing simply works better.

What Everyday Listening Now Feels Like

The improvements enabled by on-device audio processing often appear subtle at first. But across different environments, they add up to a noticeably smarter listening experience.

Consider a few common scenarios.

The Office Call

During video meetings, earbuds must do two things at once: isolate your voice and suppress background noise.

With advanced local processing, earbuds can detect the acoustic signature of human speech and selectively prioritize it. Keyboard clicks, fan noise, and distant conversations fade away.
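A very simple stand-in for speech detection is an energy-based voice activity detector: it tracks the background level and flags frames that rise well above it. This is only a sketch under stated assumptions. Shipping products likely rely on trained models, and the 10 dB margin, the frame size, and the synthetic signals below are illustrative choices.

```python
import math
import random


def frame_energy_db(frame):
    mean_square = sum(s * s for s in frame) / len(frame)
    return 10.0 * math.log10(mean_square + 1e-12)


def detect_voice(frames, margin_db=10.0, floor_alpha=0.95):
    """Flag frames whose energy exceeds a slowly tracked noise floor by margin_db."""
    floor_db = frame_energy_db(frames[0])  # assumes the first frame is background only
    flags = []
    for frame in frames:
        energy_db = frame_energy_db(frame)
        is_voice = energy_db > floor_db + margin_db
        if not is_voice:
            # Only quiet frames update the floor, so speech does not raise it.
            floor_db = floor_alpha * floor_db + (1.0 - floor_alpha) * energy_db
        flags.append(is_voice)
    return flags


random.seed(1)
FRAME = 160  # 10 ms at an assumed 16 kHz sample rate
frames = []
for index in range(10):
    frame = [random.gauss(0.0, 0.01) for _ in range(FRAME)]  # quiet room noise
    if 4 <= index <= 6:  # a loud 200 Hz tone stands in for speech
        frame = [s + 0.5 * math.sin(2 * math.pi * 200 * n / 16000)
                 for n, s in enumerate(frame)]
    frames.append(frame)

flags = detect_voice(frames)
print(flags)
```

Energy alone cannot tell a voice from a slammed door, which is why practical systems add spectral features or learned classifiers on top of a gate like this.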

The Commute

Urban soundscapes change constantly. Trains arrive, buses idle, traffic surges.

Static noise cancellation struggles here. But on-device audio processing continuously recalculates the filtering model as the environment evolves.

Instead of aggressive cancellation that removes everything, modern earbuds selectively preserve useful sounds like announcements or nearby voices.

The Gym

Movement introduces wind noise and shifting acoustic reflections. Local processing allows earbuds to dynamically adjust microphones and filters depending on motion patterns.

This prevents the sudden audio distortions that older earbuds often produce during workouts.

The Hardware Arms Race Behind the Scenes

As on-device audio processing becomes central to the listening experience, chipset design has become a critical differentiator.

Manufacturers are investing heavily in low-power neural processing units (NPUs) optimized specifically for audio workloads.

Key design priorities include:

| Engineering Goal | Why It Matters |
|---|---|
| Ultra-low power consumption | Extends battery life during constant processing |
| Real-time AI inference | Enables adaptive sound models |
| High-speed memory access | Reduces audio buffering delay |
| Efficient DSP pipelines | Maintains consistent audio fidelity |

The challenge is balancing processing capability with tiny battery constraints.

Earbuds have far less energy available than smartphones, which means every algorithm must be carefully optimized.
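A back-of-the-envelope sketch shows why optimization matters. Runtime is roughly battery capacity divided by average current draw, so every extra milliamp spent on processing comes straight out of listening time. The capacity and current figures below are hypothetical round numbers, not measurements of any product.

```python
def runtime_hours(battery_mah, base_current_ma, processing_current_ma):
    """Approximate runtime: capacity divided by total average current draw."""
    return battery_mah / (base_current_ma + processing_current_ma)


BATTERY_MAH = 50.0     # hypothetical earbud cell capacity
BASE_CURRENT_MA = 5.0  # hypothetical radio plus amplifier draw

for processing_ma in (2.0, 5.0):
    hours = runtime_hours(BATTERY_MAH, BASE_CURRENT_MA, processing_ma)
    print(f"processing at {processing_ma:.0f} mA -> about {hours:.1f} h")
```

In this toy example, trimming the processing load from 5 mA to 2 mA adds roughly two hours of playback, which is the kind of gain that chipset optimization targets.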

Why Listeners Often Notice the Benefits Without Knowing Why

One of the most interesting aspects of on-device audio processing is how invisible it feels.

Most users don’t consciously think about audio algorithms. They simply notice that the earbuds feel more natural to use.

Music sounds clearer during movement. Calls remain intelligible in noisy environments. Spatial audio maintains stability.

This psychological effect matters. The best technology rarely feels technological—it just works.

And when the system responds instantly without requiring user interaction, listeners perceive the device as more intuitive and intelligent.

The Subtle Impact on Creativity and Productivity

Beyond entertainment, on-device audio processing is quietly influencing how people work and create.

For remote professionals, better voice isolation reduces cognitive fatigue during long calls. Clearer audio means less effort spent deciphering speech.

Content creators benefit from improved monitoring accuracy when editing audio or recording voiceovers.

Even casual listeners experience less listening strain during long sessions because adaptive processing maintains consistent tonal balance across environments.

In other words, smarter earbuds don’t just sound better—they reduce the mental effort required to listen.

When This Technology Isn’t Always the Right Choice

Despite its advantages, on-device audio processing isn’t universally perfect.

Audiophiles who prefer a completely unprocessed signal may find aggressive algorithms intrusive. Some systems apply dynamic EQ adjustments that alter the original recording’s character.

Additionally, advanced processing can sometimes introduce subtle artifacts if algorithms misinterpret certain sounds.

These trade-offs highlight an ongoing design challenge: balancing intelligence with transparency.

The best implementations offer adaptive processing without sacrificing authenticity.

What Online Communities Are Saying About Smarter Earbuds

Across audio forums and tech communities, discussions around on-device audio processing often revolve around real-world experience rather than specifications.

A snapshot of common observations reveals interesting patterns.

| User Perspective | Typical Comment |
|---|---|
| Commuters | Notice stronger noise control in chaotic environments |
| Remote workers | Appreciate clearer voice pickup in calls |
| Gamers | Value reduced latency and stable spatial audio |
| Audiophiles | Debate the balance between processing and purity |
| Travelers | Prefer local processing that works offline |
| Fitness users | Like adaptive wind and motion filtering |
| Podcast listeners | Report clearer speech separation |

The consensus isn’t about specs—it’s about consistency. Listeners value earbuds that perform reliably across unpredictable environments.

Where Earbud Audio Is Heading Next

The next wave of on-device audio processing will likely expand far beyond noise cancellation and spatial rendering.

Several emerging developments point toward a more intelligent future:

- Real-time translation processed entirely on earbuds

- Personalized hearing enhancement for mild hearing loss

- Adaptive audio environments that simulate different acoustic spaces

- Context-aware listening modes that adjust based on activity

As processors become more efficient, earbuds may eventually handle complex acoustic modeling previously reserved for studio software.

This evolution could transform earbuds into always-on audio companions rather than simple playback devices.

Vibetric Ending

Back on the subway platform, the commuter removes their earbuds as the train doors open. The city noise floods back instantly.

What felt like normal listening just seconds earlier was actually the result of thousands of tiny calculations happening every moment.

That’s the power of on-device audio processing. It doesn’t demand attention. It simply reshapes the listening experience so smoothly that most people never notice the technology at work.

Yet as earbuds continue to evolve into miniature audio computers, the way we interact with sound is changing in subtle but profound ways.

And this quiet revolution is happening just a few centimeters from our ears.

Curious About the Science Behind Smart Earbuds?

- Follow vibetric_official on Instagram to keep up with the latest trends and insights into emerging audio technology.

- Bookmark Vibetric.com; we continuously update our analysis as new developments reshape the wireless audio landscape.

Subscribe for updates and receive ongoing deep-dive breakdowns on innovations transforming personal listening.

FAQ: On-Device Audio Processing in Earbuds

What is on-device audio processing?

It refers to audio analysis and enhancement performed directly inside the earbuds using dedicated processors rather than relying on smartphones or cloud servers.

Why does it matter?

Local processing reduces latency, improves privacy, and allows real-time adaptation to changing environments.

Does it improve noise cancellation?

Yes. It allows earbuds to analyze noise patterns instantly and adjust filters dynamically rather than relying on static models.

Does it improve sound quality?

Often yes, because adaptive algorithms can improve clarity and balance depending on environmental conditions.

Does it drain the battery faster?

Not necessarily. Modern DSP chips are optimized for extremely low power consumption.

Is it better for privacy?

Yes. Since data is processed locally, sensitive audio information doesn’t need to be transmitted to external servers.

Can earbuds personalize the sound?

Many modern systems create custom EQ profiles based on ear shape and listening habits.

Is it useful for gaming?

Absolutely. It reduces latency and stabilizes spatial audio positioning during gameplay.

Will most earbuds adopt it?

The trend suggests yes, particularly for premium models where adaptive audio features are becoming standard.

Does it replace good drivers?

No. Good drivers still matter, but intelligent processing increasingly determines how effectively that hardware performs.
